Why Machine Vision Is Flawed in the Same Way as Human Vision

Deep convolutional neural networks have taken the world of artificial intelligence by storm. These machines now routinely outperform humans in tasks ranging from face and object recognition to playing the ancient game of Go.

The irony, of course, is that neural networks were inspired by the structure of the brain. It turns out that there are remarkable similarities between the broader structure of the deep convolutional neural networks behind machine vision and the structure of the brain responsible for vision. One of these evolved over millions of years, the other came about over the course of just a few decades. But both seem to work in the same way.

And that raises an interesting question—if machine vision and human vision work in similar ways, are they also restricted by the same limitations? Do humans and machines struggle with the same vision-related challenges?

Today we get an answer thanks to the work of Saeed Reza Kheradpisheh at the University of Tehran in Iran and a few pals from around the world. These guys have tested humans and machines with the same vision challenges and discovered that they do indeed struggle with the same kind of problems.

First some background. The pathway in the brain responsible for vision operates in several layers, each of which is thought to extract progressively more information from an image, such as movement, shape, color, and so on. Each layer consists of huge numbers of neurons connected into a vast network.

Deep convolution neural networks have a similar structure. They too are made up of layers, and each of these is a network of circuits designed to mimic the behavior of neurons, hence the term neural network.

Through much trial and error, computer scientists have found that these layers perform best when each extracts progressively more information about an image. And when they look at the behavior of layers individually, they find remarkable similarities to the function of specific layers in the brain.

But while the human brain is good at object recognition, it is not perfect. Change the image in some way and it is not always easy to recognize the object it contains.

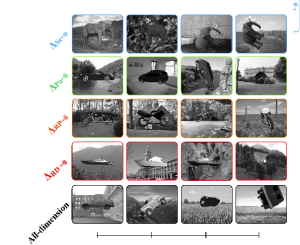

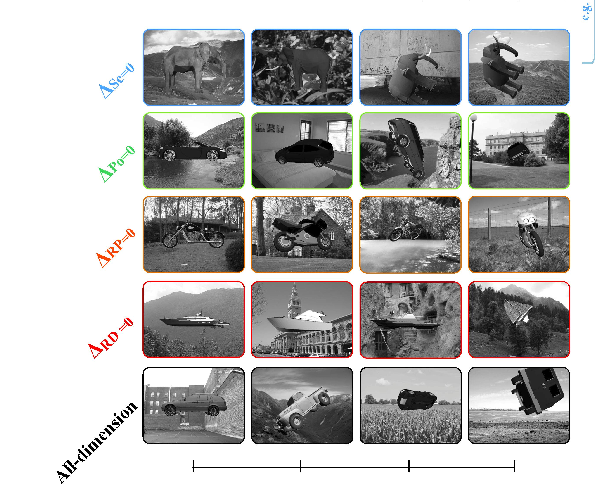

Imagine a picture of a car taken from the side, for example. There are various ways this image can be changed. One is to translate the object, to move it from one part of the image to another. Another is to enlarge or shrink it.

Then there are two types of rotation. One is an “in plane” rotation that shows the car from the side but upside down, for example.

There are also rotations in depth. In this case, you have to imagine the car as a 3-D object seen from the side. Rotating in depth then shows the car from the front, the back or a three-quarter view and so on.

But given two pictures of the same car from different viewpoints, how hard is it to be sure that both show the same vehicle? Clearly some types of distortion are more challenging than others—but which? And do machines have the same trouble?

To find out, Kheradpisheh and co produced image variations of four different kinds of objects and then tested how well humans and deep convolutional neural networks cope with the task of recognizing them.

The test for humans involves picking an image at random and displaying it on a screen for 12.5 microseconds. The subject then has to press one of four buttons to indicate whether image is of a car, ship, motorcycle, or animal.

The team tested a total of 89 different humans who each viewed 960 images. The researchers used the speed and accuracy of each subject’s response as a measure of how well they recognized each object.

The team also carried out an equivalent test on two of the most powerful deep convolutional networks used for object recognition, one developed at the University of Toronto in Canada and the other at the University of Oxford in the U.K.

The results make for interesting reading. “We found that humans and DCNNs largely agreed on the relative difficulties of each kind of variation,” say Kheradpisheh and co. “Rotation in depth is by far the hardest transformation to handle, followed by scale, then rotation in plane, and finally position (much easier).”

That’s interesting work with has some immediate implications. For a start, computer scientists will need to be much more careful in the way they create databases for testing machine vision. In future they’ll need to control for the factors that machines find harder.

But it also shows the potential for deep convolutional neural networks to help probe the way human cognition works. The design of certain images is a critical task in applications such as air traffic control, emergency exits, instructions for the use of lifesaving equipment and so on.

Using humans to evaluate these images is a time-consuming and expensive business. But perhaps these kinds of neural networks could do the work instead or at least screen out the worst examples and leaving humans with a much better defined and less onerous task.

Beyond that, it may be possible to design machine-vision systems that aren’t fooled in the same way humans and so could augment human decision making in critical situations such as driving.

And that’s just the start. Neural networks are already revolutionizing all kinds of tasks that used to be the preserve of humans—that change is only going to accelerate.

Ref: arxiv.org/abs/1604.06486 : Humans and Deep Networks Largely Agree on Which Kinds of Variation Make Object Recognition Harder

Leave a Reply