Event Camera Helps Drone Dodge Thrown Objects

Impressive, right? As far as the drone is concerned, this is a clever way of mimicking obstacle encounters in high-speed flight, since it’s relative velocity that’s important. Also, the researchers say that in each case, motion capture data confirms that “the ball would have hit the vehicle if the avoidance maneuver was not executed.”

The time it takes a robot (of any kind) to avoid an obstacle is constrained primarily by perception latency, which includes perceiving the environment, processing those data, and then generating control commands. Depending on what sensor you’re using, what algorithm you’re using, and what computer you’re using, typical perception latency is anywhere from tens of milliseconds to hundreds of milliseconds. The sensor itself is usually the biggest contributor to this latency, which is what makes event cameras so appealing—they can spit out data with a theoretical latency measured in nanoseconds.

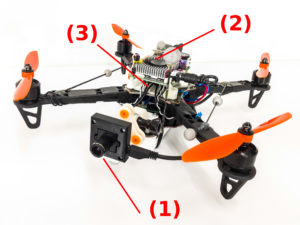

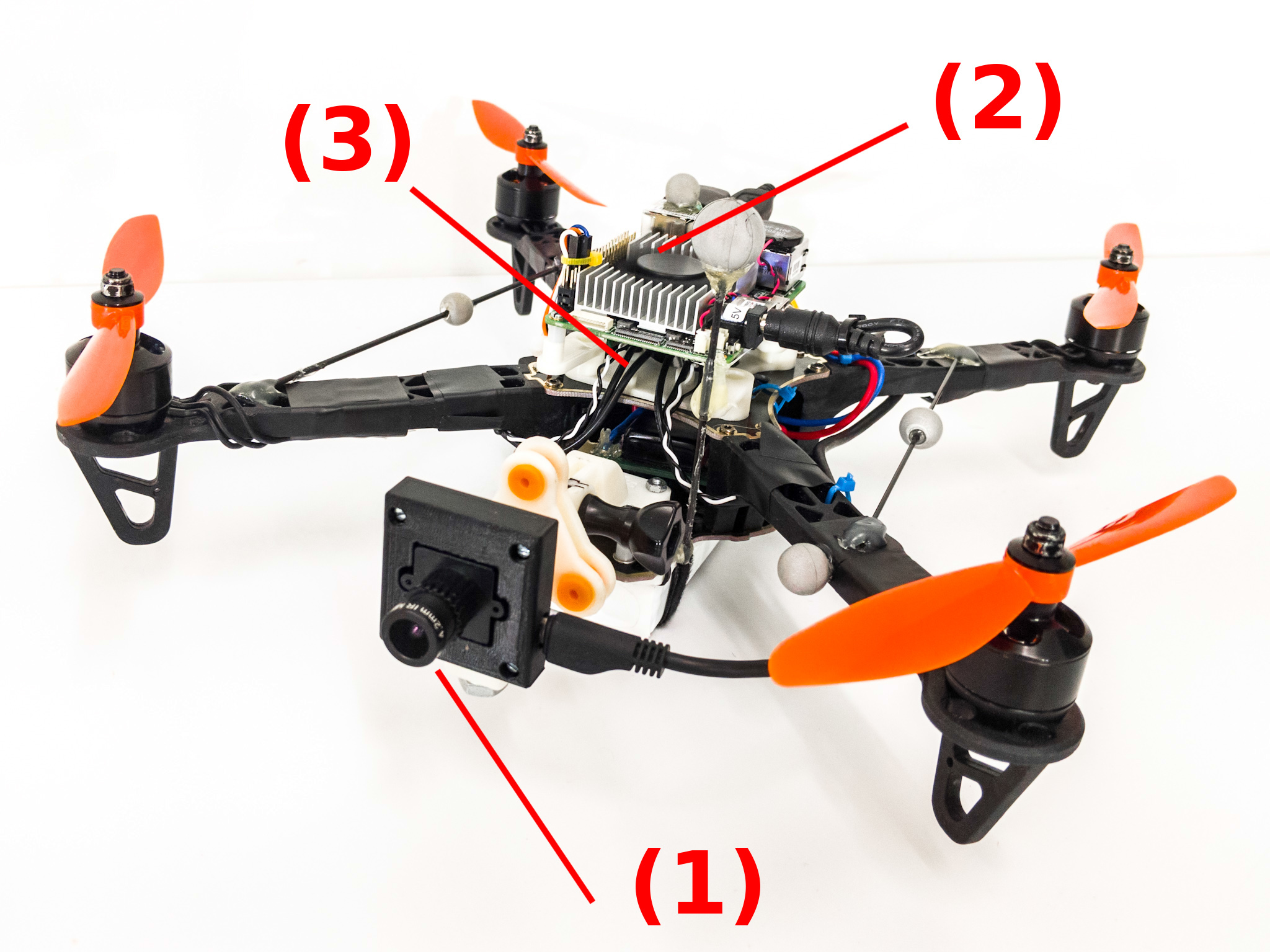

The question that the University of Zurich researchers want to answer is how much perception latency actually affects the maximum speed at which a drone can move while still being able to successfully dodge obstacles. Comparing the kind of traditional vision sensors that you’d find on a research-grade quadrotor (both mono and stereo cameras) with an event camera, it turns out that the difference is not all that significant, as long as you’re dealing with a quadrotor that’s not moving too quickly. As the speed of the quadrotor increases, though, event cameras can start to make a difference: a quadrotor with a thrust-to-weight ratio of 20, for example, could achieve maximum safe obstacle avoidance speeds that are about 12 percent higher than if it were using a traditional camera. Quadrotors this powerful don’t exist yet (maximum thrust-to-weight ratios are closer to 10), but we’re getting there.
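To get a feel for why latency matters more as a quadrotor gets more agile, here is a minimal back-of-the-envelope sketch. It is not the model from the paper. It assumes the drone flies straight at a static obstacle, first sees it at a fixed sensing range, waits out the perception latency, and then accelerates sideways at a constant rate until it has moved clear. The function names, the level-flight approximation for lateral acceleration, and all of the numbers in the usage lines are illustrative assumptions.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def lateral_accel(thrust_to_weight: float) -> float:
    """Sideways acceleration available while holding altitude.

    Assumption: the vertical thrust component cancels gravity and
    whatever is left over pushes the drone sideways.
    """
    if thrust_to_weight <= 1.0:
        raise ValueError("thrust-to-weight ratio must exceed 1 to hover")
    return G * math.sqrt(thrust_to_weight ** 2 - 1.0)


def max_safe_speed(sensing_range_m: float, latency_s: float,
                   clearance_m: float, thrust_to_weight: float) -> float:
    """Highest forward speed at which the sidestep finishes before impact.

    The drone needs latency_s to react plus sqrt(2 * clearance / a) to
    move clearance_m sideways from rest; that total must fit inside the
    time to contact, sensing_range_m / speed.
    """
    a = lateral_accel(thrust_to_weight)
    maneuver_time_s = math.sqrt(2.0 * clearance_m / a)
    return sensing_range_m / (latency_s + maneuver_time_s)


def speed_gain(sensing_range_m: float, fast_latency_s: float,
               slow_latency_s: float, clearance_m: float,
               thrust_to_weight: float) -> float:
    """Fractional increase in max safe speed from the lower-latency sensor."""
    fast = max_safe_speed(sensing_range_m, fast_latency_s,
                          clearance_m, thrust_to_weight)
    slow = max_safe_speed(sensing_range_m, slow_latency_s,
                          clearance_m, thrust_to_weight)
    return fast / slow - 1.0


# Hypothetical numbers: 2 m sensing range, 0.5 m of sideways clearance,
# 5 ms latency for the fast sensor versus 50 ms for the slow one.
for twr in (2, 10, 20):
    gain = speed_gain(2.0, 0.005, 0.050, 0.5, twr)
    print(f"thrust-to-weight {twr}: {gain:.1%} higher max safe speed")
```

In this toy model, the sidestep itself takes less time as the thrust-to-weight ratio goes up, so a fixed latency difference eats a larger share of the time budget. That matches the qualitative trend the researchers describe, although the specific percentages it prints depend entirely on the assumed numbers and should not be compared with the paper's results.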

It’s perhaps a little surprising that event cameras don’t offer a more significant latency benefit for quadrotors at lower speeds, but it’s important to remember that event cameras are pretty cool for other reasons as well: They don’t suffer from motion blur, and they’re much more resilient to lighting conditions, able to work just fine in the dark as well as when you’re dealing with high dynamic range, like looking into the sun. As the speed and agility of drones increases, and especially if we want to start using them in unstructured environments for practical purposes, it sure seems like event cameras will be the way to go.

“How Fast is Too Fast? The Role of Perception Latency in High-Speed Sense and Avoid,” by Davide Falanga, Suseong Kim, and Davide Scaramuzza from the University of Zurich, has been accepted to IEEE Robotics and Automation Letters.

[ RPG ]
